Inside Claude Code: How Sub-Agents and Parallel Execution Define Next-Gen Coding Agents

Introduction: The Evolution of Coding Agents

Coding agents represent a fundamental shift in how developers interact with their codebases. Unlike traditional autocomplete tools or simple code generation models, modern coding agents operate autonomously across multiple files, maintain context over extended sessions, and can break down complex tasks into manageable subtasks. These systems leverage Large Language Models (LLMs) in sophisticated agentic loops where the model can call tools, observe results, and iteratively work toward task completion.

The journey from simple code completion to full-featured coding agents began with tools like GitHub Copilot, which offered inline suggestions. The next generation brought conversational coding assistants like ChatGPT and early Claude integrations that could generate entire functions or files. Today’s third-generation tools like Claude Code, Cursor, and Windsurf represent true agentic systems capable of autonomous multi-step reasoning, parallel task execution, and complex codebase manipulation. This article unpacks the critical components that make Claude Code uniquely effective, providing both a technical framework and a high-level overview suited for machine learning engineers.

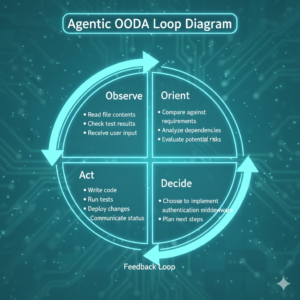

The key to distinguishing a coding agent from a chat-based coding assistant lies in autonomy and tool use. A coding agent operates in a loop: observe the current state, reason about what needs to happen, decide on actions, execute those actions through tools, and repeat. This OODA loop (Observe, Orient, Decide, Act) allows agents to work independently on tasks that might require dozens of file reads, searches, edits, and test runs before completion.
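The loop described above can be sketched in a few lines. This is a minimal illustration, not Claude Code's implementation: `run_agent`, `Action`, `AgentState`, and `scripted_policy` are hypothetical names, and the policy is a scripted stand-in for what would be an LLM call in a real agent.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    tool: str            # name of the tool to call, or "finish" to stop
    argument: str = ""   # a single string argument keeps the sketch simple


@dataclass
class AgentState:
    goal: str
    observations: list[str] = field(default_factory=list)


def run_agent(goal: str,
              decide: Callable[[AgentState], Action],
              tools: dict[str, Callable[[str], str]],
              max_steps: int = 10) -> str:
    """Observe -> orient/decide -> act, repeated until the policy finishes."""
    state = AgentState(goal)
    for _ in range(max_steps):
        action = decide(state)                         # orient + decide (an LLM call in practice)
        if action.tool == "finish":
            return action.argument
        result = tools[action.tool](action.argument)   # act through a tool
        state.observations.append(                     # observe the result
            f"{action.tool}({action.argument!r}) -> {result}")
    return "stopped: step limit reached"


# Hypothetical scripted policy standing in for the model.
def scripted_policy(state: AgentState) -> Action:
    if not state.observations:
        return Action("read_file", "app.py")
    return Action("finish", f"done after {len(state.observations)} tool call(s)")


fake_files = {"app.py": "print('hello')"}
tools = {"read_file": lambda path: fake_files.get(path, "<missing>")}
answer = run_agent("summarize app.py", scripted_policy, tools)
```

The step limit is a design choice worth keeping even in a sketch: without it, a policy that never emits "finish" would loop forever.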

Code Indexing and Search: The Foundation of Agent Intelligence

Before diving into what makes Claude Code exceptional, it’s essential to understand how coding agents find and navigate information in codebases. This capability fundamentally determines an agent’s effectiveness, as no amount of reasoning power matters if the agent cannot locate relevant code.

Approaches to Code Search

Modern coding agents employ four primary strategies for code discovery, each with distinct tradeoffs:

Syntactic indexing: Parsing code to understand file structures, classes, functions, and variables, enabling searches by code constructs rather than just strings.

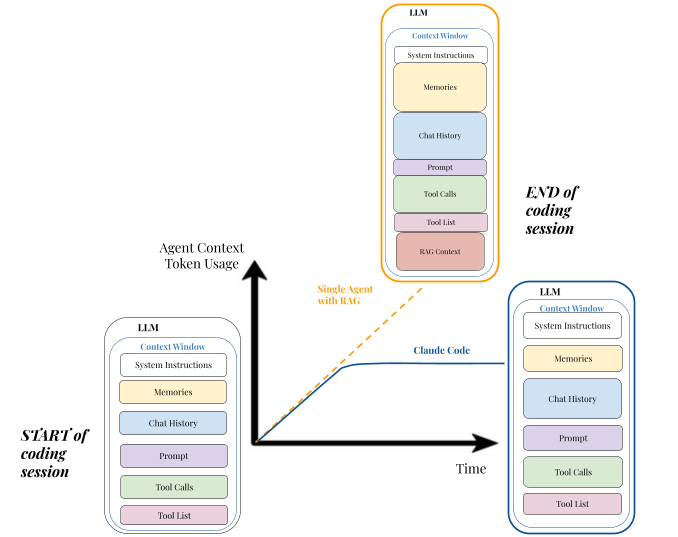

Semantic Search via RAG (Retrieval-Augmented Generation) represents the embedding-based approach. Tools like Cursor and Windsurf create vector embeddings of code chunks, storing them in vector databases for semantic similarity search. When a developer asks “where do we handle user authentication?”, the system converts this query into an embedding and retrieves semantically similar code segments. This approach excels at refactoring or feature discovery by understanding intent and finding conceptually related code even when exact keywords differ. However, RAG systems require significant upfront indexing, consume substantial memory, and can struggle with very large codebases exceeding their embedding coverage.

Pattern-based search with ripgrep takes a fundamentally different approach. Instead of semantic understanding, tools like ripgrep perform very fast regex pattern matching across entire codebases. When searching for function.*authenticate, ripgrep can scan millions of lines in a fraction of a second. This deterministic approach guarantees you’ll find exact matches and works consistently regardless of codebase size. The limitation is that developers or agents must know what patterns to search for: you cannot ask ripgrep to “find authentication logic” without translating that intent into concrete patterns.

Sub-agent search delegation represents Claude Code’s innovative approach. Rather than relying solely on pre-indexed embeddings or pattern matching, Claude Code can spawn specialized sub-agents tasked with search missions. These sub-agents receive focused prompts like “find all template rendering code” and autonomously execute multiple search strategies in their own context windows. They might start with broad Glob patterns to identify relevant directories, then use Grep for specific patterns, then read promising files to verify relevance. This dynamic, multi-strategy approach combines the flexibility of semantic understanding with the precision of pattern matching.
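As a rough sketch of the multi-strategy search a sub-agent might run, the following narrows by file name first, then greps the surviving files, and finally condenses the hits into a short summary. It uses only the Python standard library as a stand-in for the Glob and Grep tools; `search_codebase` and `summarize` are hypothetical names, not part of Claude Code.

```python
import re
from pathlib import Path


def search_codebase(root: Path, file_glob: str, pattern: str,
                    max_hits: int = 20) -> list[dict]:
    """Glob for candidate files, then regex-match their lines."""
    regex = re.compile(pattern)
    hits: list[dict] = []
    for path in sorted(root.glob(file_glob)):        # step 1: narrow by file name
        if not path.is_file():
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for number, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):                   # step 2: pattern match
                hits.append({
                    "file": path.relative_to(root).as_posix(),
                    "line": number,
                    "text": line.strip(),
                })
                if len(hits) >= max_hits:            # cap what gets reported back
                    return hits
    return hits


def summarize(hits: list[dict]) -> str:
    """Step 3: return a concise report instead of raw file contents."""
    files = sorted({hit["file"] for hit in hits})
    return f"{len(hits)} match(es) in {len(files)} file(s): " + ", ".join(files)
```

Only the output of `summarize` would need to travel back to the supervisor, which is the point of delegating the search in the first place.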

Why Claude Code’s Approach Stands Out

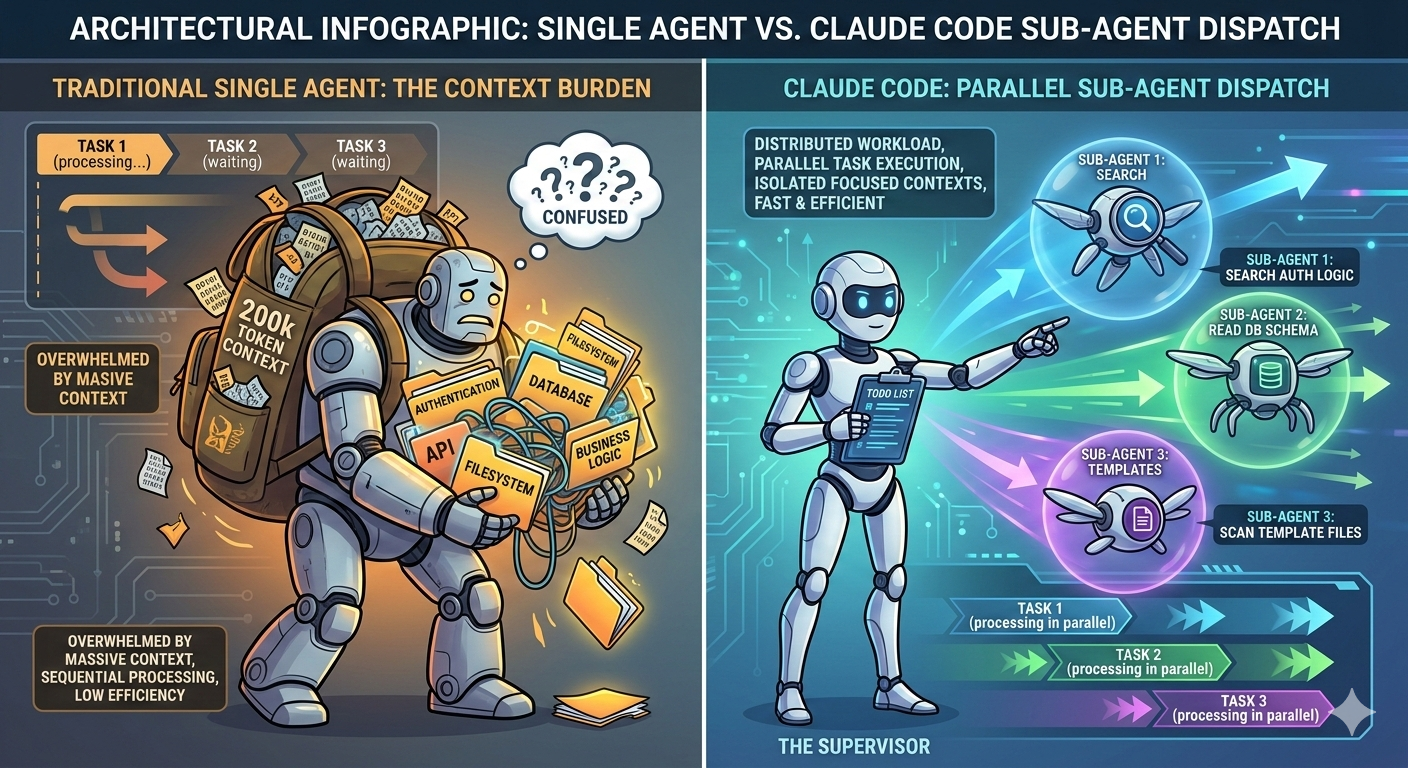

Claude Code’s choice to emphasize ripgrep-based search with sub-agent orchestration over pure RAG reflects a pragmatic engineering decision. While burning more tokens (approximately 15× more than single-agent chat interactions), this architecture avoids the brittleness of embedding-based systems. There’s no indexing delay when switching projects, no memory overhead from vector databases, and no risk of embeddings becoming stale as code evolves. The agent simply searches the current state of the filesystem directly.

More importantly, sub-agents prevent search activities from polluting the main agent’s context window. When a sub-agent investigates twenty candidate files to find the right authentication middleware, those file contents never burden the supervisor agent’s 200,000 token context limit. The sub-agent returns only a concise summary of findings, preserving the supervisor’s ability to maintain strategic oversight of the larger task.

Other features that set Claude Code apart include a streaming JSON parser with recovery and smart truncation of data sent to LLMs or telemetry services. The parser gracefully handles a common streaming problem: LLM output often arrives as fragmented, incomplete JSON, on which traditional JSON parsers simply fail.
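To illustrate the idea of recovering from fragmented JSON, here is a minimal sketch that closes any open strings, objects, and arrays before parsing. It is an assumption about how such recovery could work, not Claude Code's actual parser, and `complete_partial_json` is a hypothetical name.

```python
import json


def complete_partial_json(fragment: str):
    """Best-effort parse of a JSON prefix; returns None if it cannot be repaired."""
    closers: list[str] = []   # closing brackets still owed, innermost last
    in_string = False
    escaped = False
    for ch in fragment:
        if in_string:
            if escaped:
                escaped = False
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False
        elif ch == '"':
            in_string = True
        elif ch == "{":
            closers.append("}")
        elif ch == "[":
            closers.append("]")
        elif ch in "}]" and closers:
            closers.pop()

    repaired = fragment
    if in_string:
        if escaped:
            repaired = repaired[:-1]                 # drop a dangling backslash
        repaired += '"'                              # close the open string
    else:
        repaired = repaired.rstrip().rstrip(",")     # drop a trailing comma
    repaired += "".join(reversed(closers))           # close containers inside-out
    try:
        return json.loads(repaired)
    except json.JSONDecodeError:
        return None                                  # e.g. cut off right after a key


partial = '{"tool": "Read", "args": {"path": "src/ma'
recovered = complete_partial_json(partial)
```

A standard `json.loads(partial)` call raises `JSONDecodeError` on the same input. Fragments cut off in the middle of a key or right after a colon still return `None` here; a production parser would need to handle those cases too.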

The Four Pillars of Claude Code’s Architecture

1. Planning with the Todo Tools

Effective agents must be able to articulate their plan before diving into execution. Claude Code implements this through its TodoWrite and TodoRead tools, which create structured task lists visible to both the agent and the developer. This serves multiple critical functions beyond simple organization. Note that current Claude Code versions may no longer expose an explicit TodoRead tool; its functionality appears to have been rolled into the TodoWrite tool.

First, the todo list forces the agent to decompose ambiguous requests into concrete, actionable steps. When asked to “add user authentication,” Claude Code creates todo items like “Create User model with password hashing,” “Implement JWT token generation,” “Add authentication middleware,” and “Write authentication tests.” This decomposition process catches many planning errors early—if the agent cannot articulate specific steps, it likely doesn’t understand the task.

Second, todo items have explicit states: pending, in_progress, and completed. This state tracking keeps the agent grounded in what it has actually accomplished versus what remains. Without this mechanism, agents frequently lose track of progress in long-running sessions, sometimes re-implementing features or skipping critical steps.

Third, the todo system provides transparency to developers. You can see at a glance what Claude Code plans to do next, allowing you to course-correct before the agent heads down an incorrect path. This human-in-the-loop oversight proves essential for complex tasks where requirements may be ambiguous or partially specified.

The todo system also helps agents recover from context truncation. In extremely long sessions that exceed the 200,000 token context limit, early conversation turns get truncated. The todo list persists as a compressed representation of the overall plan, allowing the agent to maintain strategic coherence even as tactical details from earlier in the session fade from active context. Together, these properties keep the agent focused and support complex, multi-step workflows with real-time progress visibility.
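The three states described above map naturally onto a small data structure. The sketch below is illustrative only: `TodoList` and its methods are hypothetical, and the rule that only one item may be in progress at a time is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Status(str, Enum):
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"


@dataclass
class Todo:
    content: str
    status: Status = Status.PENDING


class TodoList:
    MARKERS = {Status.PENDING: "[ ]", Status.IN_PROGRESS: "[~]", Status.COMPLETED: "[x]"}

    def __init__(self, contents: list[str]):
        self.items = [Todo(content) for content in contents]

    def start(self, index: int) -> None:
        # Assumption for this sketch: at most one item is in progress at a time.
        if any(item.status is Status.IN_PROGRESS for item in self.items):
            raise ValueError("finish the current item before starting another")
        self.items[index].status = Status.IN_PROGRESS

    def complete(self, index: int) -> None:
        self.items[index].status = Status.COMPLETED

    def remaining(self) -> list[str]:
        return [item.content for item in self.items
                if item.status is not Status.COMPLETED]

    def render(self) -> str:
        """Compact view that can be shown to the developer or re-fed to the model."""
        return "\n".join(f"{self.MARKERS[item.status]} {item.content}"
                         for item in self.items)


todos = TodoList([
    "Create User model with password hashing",
    "Implement JWT token generation",
])
todos.start(0)
todos.complete(0)
todos.start(1)
```

Because `render()` produces a few short lines regardless of how long the session has run, it can stand in for the plan after earlier turns have been truncated.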

2. Memory Through Filesystem Persistence

Claude Code treats the filesystem itself as its primary memory system. It reads and writes files actively as it executes tasks, allowing it to store intermediate results and learnings persistently. This design decision, while seemingly simple, enables maintenance of long-running context by extracting and compressing insights from executed code.

The key innovation lies in special files like AGENTS.md or CLAUDE.md that serve as compressed knowledge bases about a codebase. When Claude Code encounters recurring patterns, makes mistakes, or learns important architectural principles about your project, it can append guidance to these files. Over time, these documents become condensed playbooks capturing institutional knowledge.

For example, after struggling with a particular dependency’s API, Claude Code might add a note: “The DataProcessor class requires explicit cleanup via close() after use. Always wrap in try-finally blocks. See src/utils/processor.py for examples.” Future sessions can read this guidance before working with that dependency, avoiding the same pitfall.

Filesystem-based memory remains local to each project, travels with the repository, and can be version-controlled. Developers can review, edit, and refine these memory files just like any other code artifact. The memory becomes part of the project’s documentation infrastructure rather than an opaque external system.

The filesystem also enables memory across different agent types. When a sub-agent discovers important information during its task, it can write findings to project files that persist beyond that sub-agent’s limited lifetime. The main supervisor agent or future sub-agents can then retrieve this information as needed, which is useful for extended coding sessions and large codebases.
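A minimal sketch of this kind of filesystem memory is shown below: a helper that appends a note to a Markdown memory file and skips exact duplicates. The function name `remember`, the "## Learned notes" heading, and the bullet format are assumptions for illustration. For simplicity the note is appended at the end of the file, which presumes the notes section is the last one.

```python
from pathlib import Path


def remember(memory_file: Path, note: str, heading: str = "## Learned notes") -> bool:
    """Append a note under a heading; return False if it is already recorded."""
    text = memory_file.read_text(encoding="utf-8") if memory_file.exists() else ""
    bullet = f"- {note.strip()}"
    if bullet in text:
        return False                                   # avoid duplicate guidance
    if heading not in text:
        separator = "\n\n" if text.strip() else ""
        text = text.rstrip("\n") + separator + heading + "\n"
    text = text.rstrip("\n") + "\n" + bullet + "\n"
    memory_file.write_text(text, encoding="utf-8")
    return True
```

A call such as `remember(Path("CLAUDE.md"), "Always call close() on DataProcessor after use.")` leaves an ordinary text file that can be reviewed, edited, and committed like any other project artifact.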

3. Parallel Sub-Agent Dispatch

This represents Claude Code’s most significant architectural innovation. The ability to spawn multiple independent sub-agents that execute subtasks concurrently with their own context windows fundamentally changes what’s computationally feasible.

When Claude Code encounters a task like “document the context passed to each template in this project,” it doesn’t try to sequentially read every template file within its own context. Instead, it spawns multiple sub-agents in parallel: one for index templates, one for database templates, one for table templates, and so on. Each sub-agent receives a narrow, focused prompt containing only the information relevant to its specific subtask.

This architecture provides three critical benefits:

Token efficiency through context isolation. Sub-agents don’t need to carry the full conversation history of the main agent. If the main agent has spent 150,000 tokens discussing various aspects of the project, a sub-agent spawned to search for authentication code doesn’t need any of that history. It receives a focused prompt like “Search this codebase for authentication and authorization logic. Document your findings concisely.” This allows the system to process vastly more information than would fit in any single context window.

Computational speed through parallelization. The cost of attention in transformers scales quadratically with sequence length, so processing a 200,000 token context is dramatically slower than processing five 40,000 token contexts. By running sub-agents in parallel, Claude Code can process five search tasks simultaneously, each operating at peak speed with a small context window. This can reduce task completion time by up to 90% compared to sequential single-agent approaches.

Improved reasoning through focus. A sub-agent attending only to tokens relevant to its narrow task experiences less “noise” from unrelated information, and this focused attention leads to more accurate results. As contexts grow very long, models become measurably less effective at retrieving and reasoning about information, particularly information in the middle of the context, a phenomenon sometimes called the “lost in the middle” problem. Sub-agents sidestep this by never accumulating massive contexts in the first place.
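The quadratic-scaling argument can be checked with back-of-the-envelope arithmetic. The sketch below assumes attention cost is simply proportional to the square of the context length and ignores everything else (the parts of a transformer that scale linearly, orchestration overhead, and the cost of the supervisor itself), so the ratios are illustrative rather than measured.

```python
def attention_cost(tokens: int) -> int:
    """Relative self-attention cost, assumed proportional to tokens squared."""
    return tokens ** 2


single_context = attention_cost(200_000)       # one agent holding everything
per_worker = attention_cost(40_000)            # one of five sub-agents
all_workers = 5 * per_worker                   # total work across the five

total_work_ratio = single_context / all_workers    # 5.0: five times less attention work overall
wall_clock_ratio = single_context / per_worker     # 25.0: if the five workers run fully in parallel
```

Under these assumptions, splitting the context helps twice: the total attention work drops because of the quadratic term, and the elapsed time drops further because the smaller jobs run concurrently.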

The sub-agent pattern also enables specialization. Claude Code can dispatch different types of sub-agents optimized for different tasks: a “general-purpose” agent for broad research, specialized agents for code review, and custom agents for project-specific workflows.

4. Comprehensive System Prompts with Rich Examples

While less glamorous than architectural innovations, Claude Code’s detailed system prompts represent months of prompt engineering refinement. These prompts contain extensive guidance on tool usage, including both positive examples (what to do) and negative examples (what to avoid).

Consider the guidance for the Task tool, which spawns sub-agents and is called the Agent tool in the prompt text. The system prompt explicitly states:

“When NOT to use the Agent tool: If you want to read a specific file path, use the Read or Glob tool instead of the Agent tool, to find the match more quickly. If you are searching for code within a specific file or set of 2-3 files, use the Read tool instead of the Agent tool, to find the match more quickly.”

This negative example prevents the agent from over-using sub-agents for trivial tasks where direct tool use would be more efficient. Without such guidance, naive agents spawn sub-agents for simple file reads, wasting tokens and time.

The system prompts also encode sophisticated behavioral patterns like Test-Driven Development. Claude Code is instructed to write failing tests first, implement just enough code to pass those tests, then move to the next feature. This discipline keeps the agent grounded in verifiable progress rather than speculative implementation.

Critical security and safety guidance also lives in system prompts. Bash tool usage includes warnings about validating directory existence before file operations, proper error handling, and avoiding destructive commands without confirmation. These guardrails prevent common failure modes where agents accidentally damage project state.

Why Sub-Agents Matter: A Deeper Look

The effectiveness of Claude Code’s sub-agent architecture deserves deeper examination, as it represents a fundamental shift in how we architect agentic AI systems.

Traditional single-agent systems face an inherent tension: context windows enable them to “see” large amounts of information, but LLMs struggle to effectively utilize that information as context grows (see Needle in Haystack paper). A 200,000 token context window sounds powerful until you realize that attention cost grows quadratically with context length, leading to slower responses and degraded reasoning about information in the middle of that context.

Sub-agents elegantly resolve this tension through a divide-and-conquer strategy. Instead of one agent trying to hold everything in its head, Claude Code maintains a lightweight supervisor that coordinates many focused workers. The supervisor never needs to read the full contents of twenty different files—it just needs to understand the summary reports from sub-agents that did read those files.

This mirrors effective organizational structures in human engineering teams. A tech lead doesn’t personally review every line of code in a large project. They delegate focused reviews to team members, who return with summarized findings and recommendations. The tech lead synthesizes these reports to make architectural decisions without drowning in implementation details. Claude Code’s sub-agent architecture implements this same organizational principle.

The importance of parallel execution is hard to overstate. When Claude Code needs to document template contexts across 47 HTML files, spawning five sub-agents that complete their work in 1-2 minutes each is dramatically different from a single agent sequentially processing 47 files over 10-15 minutes. This isn’t just about developer time; it’s about what becomes computationally feasible. Tasks that would be too expensive or slow with a single agent become practical with parallelization.

Importantly, Claude Code encourages developers to explicitly request sub-agent usage. Simply adding “Use sub-agents” to a request causes Claude Code to more aggressively decompose the task and dispatch parallel workers. This human guidance helps the agent understand when comprehensive breadth-first exploration justifies the token cost.

Claude Code’s Tool Ecosystem

The power of any agentic system depends on its tool ecosystem. Claude Code executes read-only tools in parallel, while write tools run sequentially for safety. Details on the suspected TypeScript code underlying many of these tools can be found here (your mileage may vary). Claude Code provides a sophisticated set of tools that enable comprehensive codebase manipulation:

Core Tools

File manipulation tools (Read, Write, Edit/MultiEdit, NotebookEdit/Read for Jupyter) give the agent full control over the filesystem. The Edit tool deserves special mention—rather than requiring the agent to regenerate entire files, it performs precise string replacements. This dramatically reduces tokens used and eliminates errors from the agent forgetting to include unchanged portions of files. Notebook Edit/Read tools provide Jupyter notebook cell operations with structure preservation. Notice that Claude Code has multiple modular tools instead of one generic file editing tool. Each tool facilitates LLM-friendly guarantees on functionality.

Search and discovery tools (Glob, Grep, LS) provide multiple strategies for finding code. Glob supports pattern-based file discovery (“**/*.ts”), Grep enables content-based search with full regex support, and LS (list directories tool) provides directory exploration. The system prompt carefully guides when to use each tool, preventing inefficient choices like using Grep when Glob would suffice.

Execution tools (Bash) allow the agent to run code, execute tests, and verify its work. The Bash tool includes sophisticated safety guidance (such as sandbox support) about validating paths and handling errors properly. Claude Code uses claude-3-5-haiku for simpler calls such as parsing Bash commands, as opposed to calling claude-3-7-sonnet (before Anthropic’s recent October 2025 update to Claude Sonnet 4.5). It also uses claude-3-5-haiku to evaluate whether a Bash command carries any prompt injection security risk. To drive the sandbox mode decision, the Bash tool considers whether the command performs a read operation (ls, grep, etc.) or a write operation (curl, touch, make, npm, etc.).

Architectural tools (Architect) let the agent design system architecture before implementation, separating planning from execution. This tool explicitly produces designs without code, preventing premature implementation.

Web tools (WebFetch, WebSearch) enable the agent to consult documentation, search for solutions to errors, and access current information beyond its training data.
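To make the Edit tool's string-replacement approach from the list above concrete, here is a minimal sketch. The function name `apply_edit` is hypothetical, and the requirement that the target string occur exactly once is an assumption made so that an edit can never silently land in the wrong place.

```python
def apply_edit(text: str, old: str, new: str) -> str:
    """Replace exactly one occurrence of `old`; refuse missing or ambiguous targets."""
    count = text.count(old)
    if count == 0:
        raise ValueError("old string not found")
    if count > 1:
        raise ValueError(f"old string is ambiguous: {count} occurrences")
    return text.replace(old, new, 1)


source = "def greet(name):\n    return 'Hello ' + name\n"
edited = apply_edit(source, "'Hello ' + name", "f'Hello {name}'")
```

The model only has to emit the old and new snippets, not the whole file, which is where the token savings come from.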

IDE Integration Tools

When running within an IDE context, Claude Code gains additional capabilities:

Diagnostics access (getDiagnostics) allows the agent to see compiler errors, linting warnings, and type errors directly. This enables the agent to proactively fix issues rather than waiting for developers to report problems.

Jupyter kernel execution (executeCode) provides a persistent Python environment for data exploration and analysis, enabling data science workflows where the agent can iteratively experiment with code.

The Agent or Task Tool: Gateway to Sub-Agents

The Task tool deserves special attention as the gateway to sub-agent dispatch. It implements hierarchical task decomposition: spawning sub-agents and synthesizing their findings.

The tool description specifies exactly which agent types are available (currently “general-purpose” with access to all tools) and describes when to use each. It provides extensive negative examples of when NOT to use sub-agents, preventing the agent from dispatching sub-agents for simple reads or searches that would be faster to do directly.

Critically, the prompt emphasizes parallel dispatch: “Launch multiple agents concurrently whenever possible, to maximize performance; to do that, use a single message with multiple tool uses.” This explicit encouragement of parallelization ensures the agent takes full advantage of Claude Code’s architecture. The prompt also sets clear expectations about sub-agent interaction: each invocation is stateless, returning a single final message. The supervisor cannot have a back-and-forth conversation with a sub-agent, so prompts must be comprehensive and specify exactly what information to return. This constraint forces clear task decomposition.
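The stateless, parallel dispatch pattern can be sketched with a thread pool. This is a simplified illustration: `run_subagent` is a hypothetical stub standing in for an LLM-backed worker, and the default limit of five parallel workers is an assumption chosen for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def run_subagent(prompt: str) -> str:
    """Hypothetical stub: a real worker would run its own agent loop here."""
    return f"summary for: {prompt}"


def dispatch(prompts: list[str],
             worker: Callable[[str], str] = run_subagent,
             max_parallel: int = 5) -> list[str]:
    """Send each prompt to an independent worker and collect one final message each."""
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # Executor.map yields results in the same order as the prompts.
        return list(pool.map(worker, prompts))


reports = dispatch([
    "Document the context passed to the index templates.",
    "Document the context passed to the database templates.",
    "Document the context passed to the table templates.",
])
```

Each worker receives only its prompt string and returns only a string, so there is no channel for follow-up questions. That mirrors the constraint described above and explains why each prompt has to be self-contained.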

This feature is novel because it goes beyond simple concatenation of results. It synthesizes them by extracting and comparing key findings across agents, identifying consensus and conflicts, preserving unique insights, and removing redundancies. Altogether, the Task tool orchestrates multiple perspectives across sub-agents in a way that was not done before. Context is built dynamically across sub-agents through priority-based truncation to the most important context, hierarchical loading of CLAUDE.md with override semantics, and summarization fallbacks.

See the Appendix for all Claude Code System Prompts and Tools here and here.

Claude Code Tool Execution Timing Analysis:

| Tool Type | Concurrency | Typical Latency | Bottleneck |
|---|---|---|---|
| ReadTool | Parallel (10) | 10-50ms | Disk I/O |
| GrepTool | Parallel (10) | 100-500ms | CPU regex |
| WebFetchTool | Parallel (3) | 500-3000ms | Network |
| EditTool | Sequential | 20-100ms | Validation |
| BashTool | Sequential | 50-10000ms | Process exec |
| TaskTool | Parallel (5) | 2000-20000ms | Sub-LLM calls |
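One way to picture the split between parallel read-only tools and sequential write tools is the scheduler sketched below. It is an assumption about how such scheduling could be arranged, not Claude Code's implementation: the `READ_ONLY` set, the `execute` function, and the choice to batch consecutive read-only calls are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import groupby
from typing import Callable

READ_ONLY = {"Read", "Grep", "Glob"}   # assumed set of side-effect-free tools


def execute(calls: list[tuple[str, str]],
            run: Callable[[str, str], str],
            max_parallel: int = 10) -> list[str]:
    """Run consecutive read-only calls concurrently; run write calls one by one."""
    results: list[str] = []
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        for is_read, group in groupby(calls, key=lambda call: call[0] in READ_ONLY):
            batch = list(group)
            if is_read:
                # Reads cannot interfere with each other, so fan them out.
                results.extend(pool.map(lambda call: run(*call), batch))
            else:
                # Writes keep their original order and never overlap.
                results.extend(run(*call) for call in batch)
    return results
```

Because a write batch only starts after the preceding read batch has finished, a read never observes a half-applied edit, and results always come back in the order the calls were issued.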

Practical Implications

Claude Code’s architecture offers valuable lessons for building agentic systems:

Parallelization requires explicit encouragement. System prompts should explicitly instruct agents to invoke multiple tools simultaneously when subtasks are independent. Sub-agents can be explicitly requested by the user or dynamically defined by the supervisor agent. For new agentic AI systems, developers should keep the architecture simple by sticking to one main control loop (with at most one branch) and a single message history to which sub-agent results are added as a “tool response”. Keep in mind that adding more agents can make a system brittle and harder to debug, and can pull it away from a steady path of improvement for the end user.

Use smaller models for intermittent tasks to save on latency and tokens. Over half of Claude Code’s LLM calls go to the claude-3-5-haiku model for discrete, specific tasks such as parsing web pages, processing git history, and summarizing long conversations.

Memory can leverage existing infrastructure. Consider filesystem-based memory that integrates naturally with developer workflows and version control. Use context files (such as Cursor Rules, CLAUDE.md, or AGENTS.md) that capture details which cannot be inferred from the codebase alone, so that you can collaborate with the agent more effectively and record user preferences.

Agentic Search > RAG. Rather than building complex RAG pipelines, Claude Code leverages ripgrep, jq, and glob commands to search codebases. With Agentic Search, the LLM looks at the first 10 lines of a file to judge whether it is relevant, then decides either to ignore the file or to look at the next 10 lines. This mirrors how a developer would approach the task and eliminates the overhead of keeping an index fresh and choosing similarity and reranking functions.

Use a mixture of low- and high-level tools, matched to how often and how accurately each needs to be used. Claude Code uses the medium-level tools Grep and Glob frequently for most tasks but can also write bash commands for special subtasks using the low-level Bash tool. High-level tools such as WebFetch and getDiagnostics are highly deterministic in their purpose, which avoids multiple low-level tool calls.

Tool descriptions are first-class prompt engineering surfaces. The quality of tool descriptions, including many positive and negative examples and usage guidance (with Markdown headings such as Tone and style and Task Management), dramatically impacts agent behavior. Invest time in refining these descriptions based on observed failure modes. Claude Code also uses special XML tags liberally throughout its prompts.

Use a todo list that the agent manages. This approach avoids context rot during long-running tasks through on-demand addition or rejection of new todo items. By referring to the todo list frequently, the agent is kept on track while providing enough flexibility to course-correct when needed.

Context window management is architectural, not just a parameter. Rather than simply requesting larger context windows, design systems that keep contexts focused and delegate breadth to parallel workers. This maintains fast inference times and high reasoning quality.

Transparency builds trust. The todo system and visible sub-agent dispatch give developers insight into agent reasoning, making the system feel less like a black box. This transparency is essential for adoption in professional settings.

Specialization beats generalization for complex tasks. A single agent trying to do everything well inevitably makes suboptimal tradeoffs. Systems that can dispatch specialized agents for specific subtasks deliver better results.
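To make the agentic search idea from the list above concrete, the sketch below reads a file in 10-line chunks and stops as soon as a chunk looks irrelevant. The name `inspect_in_chunks` is hypothetical, and the `looks_relevant` callback is a stand-in for the model's judgment; in the usage example it is a simple keyword check.

```python
from itertools import islice
from typing import Callable, Iterable


def inspect_in_chunks(lines: Iterable[str],
                      looks_relevant: Callable[[list[str]], bool],
                      chunk_size: int = 10,
                      max_chunks: int = 5) -> list[str]:
    """Read chunk by chunk; keep going only while each chunk looks relevant."""
    iterator = iter(lines)
    kept: list[str] = []
    for _ in range(max_chunks):
        chunk = list(islice(iterator, chunk_size))
        if not chunk or not looks_relevant(chunk):
            break                      # file exhausted, or not worth reading further
        kept.extend(chunk)
    return kept


def mentions_auth(chunk: list[str]) -> bool:
    # Keyword heuristic used here in place of an LLM relevance decision.
    return any("auth" in line for line in chunk)


sample = [f"auth handler line {i}" for i in range(20)] + [f"unrelated line {i}" for i in range(5)]
relevant_lines = inspect_in_chunks(sample, mentions_auth)
```

An irrelevant file costs only one small chunk of context, whereas an embedding index would have to be built and kept fresh for every file up front.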

Emerging Patterns

The coding agent space is evolving rapidly, with several emerging patterns worth watching:

Sub-agent architectures, both user-defined and created on the fly, are seeing increased adoption throughout B2C applications and enterprise workflows outside of coding agents. Current examples include Manus, poke.com, and Microsoft Amplifier, which spawn new sub-agents to address user goals.

There is a shift towards deeper self-improving agentic loops, in which agents analyze their own failures and update their instruction sets. This promises agents that become more capable over time without human intervention. Agentic loop design is also moving away from shallow, sequential architectures towards Deep Agents (a term popularized by LangChain) to handle user goals.

Claude Code has a number of interesting parts that elegantly bridge AI reasoning with complex system execution and make it a delight to work with. Many developers find Claude Code better to use than Cursor or GitHub Copilot even with the same underlying model, and we’ve covered some of the reasons behind this. It leverages what LLMs are truly good at while covering for typical LLM failure modes such as difficulty with debugging errors and evaluating performance.

Conclusion

Understanding the ‘secret sauce’ of Claude Code helps users use it more effectively, understand why it does things the way it does, and design smarter, more efficient agentic systems of their own.

Claude Code’s effectiveness as a coding agent stems from a carefully designed architecture that addresses fundamental challenges in agentic AI systems. Its todo-based planning provides structure and transparency. Filesystem-based memory enables long-term learning without complex infrastructure. Parallel sub-agent dispatch solves the context window scaling problem while dramatically improving speed.

Most importantly, Claude Code demonstrates that the future of coding agents lies not in ever-larger single-agent context windows, but in sophisticated multi-agent architectures that mirror how human engineering teams organize themselves. By distributing cognitive load across specialized workers coordinated by a lightweight supervisor, these systems achieve both breadth and depth of reasoning that single-agent approaches cannot match.

For teams building their own agentic systems, Claude Code offers a proven architectural pattern: invest in clear task decomposition, embrace parallelization, keep individual contexts focused, and build memory systems that integrate naturally with existing workflows. These principles apply beyond coding agents to any domain requiring autonomous multi-step reasoning over large information spaces.

References

- https://blog.langchain.com/how-to-turn-claude-code-into-a-domain-specific-coding-agent/

- https://blog.langchain.com/deep-agents/

- https://www.linkedin.com/posts/omarsar_how-do-you-build-effective-ai-agents-this-activity-7379254289296310272-NaMQ?utm_source=share&utm_medium=member_ios&rcm=ACoAAAFNMz8BE87j5PwRnD0BdIfreFdiiediDJY

- https://www.reddit.com/r/ChatGPTCoding/comments/1m5uloy/are_there_any_real_benefits_in_using_terminalcli/?rdt=54511

- https://claudelog.com/faqs/what-is-todo-list-in-claude-code/?ref=blog.langchain.com

- https://github.com/langchain-ai/claude-code-evals/tree/main

- https://simonwillison.net/2025/Oct/11/sub-agents/

- https://blog.fsck.com/2025/10/09/superpowers/

- https://www.anthropic.com/engineering/multi-agent-research-system

- https://simonwillison.net/2025/Jun/14/multi-agent-research-system/

- https://martinschroder.substack.com/p/behind-the-scenes-of-claude-code

- https://github.com/matthew-lim-matthew-lim/claude-code-system-prompt/blob/main/claudecode.md

- https://www.philschmid.de/the-rise-of-subagents

- https://www.philschmid.de/agents-2.0-deep-agents

- https://minusx.ai/blog/decoding-claude-code/